When we come to the eight working models, we leave the deep philosophical questions behind and become more concrete. This is about what to do in practical terms and how to do it. However, we are still at a relatively general and strategic level. The models can be said to constitute general principles that we then try to follow and be inspired by in our practical work with data collection and data analysis.

The first of the eight working models describes what is meant in this book by a task: a hypothesis about an action that might create value for learners. The closely related concept of actions is then taken from action research: action-based interventions staged by researchers to develop an organisation while trying to learn something about a more general issue. The classic scientific experiment is given a new and more social science-like form: social situations “provoked” in the classroom. Design principles describe what needs to be done to achieve a desired effect in a given situation and thus facilitate the bridging of theory and practice. Fine-grainedness is a principle that means that teachers’ own learning (theory focus) and teachers’ value creation for students (practice focus) need to be mixed in everyday life every week or two. Protocols are used to ensure teachers’ accountability in school development and to follow up in a structured way that all teachers receive formative feedback on their reflections. Written collegial learning is an alternative to the usual oral collegial learning and allows for a better balance between depth of understanding, time spent analysing and dissemination. Confidentiality means that not everyone reads everything, but rather that sensitive information is shared on a limited basis in small groups.

Task hypotheses – What can help students?

Now we come to perhaps the most important part of designed action sampling as a method, namely the action-oriented tasks that teachers receive from those who lead the research work (teacher colleagues, school leaders or experts) and which all teachers are then expected to try out in their own classrooms or lecture halls. After all, it is only when something is done differently in the classroom that there is the slightest prospect of better development for students. Even a profound change in teachers’ thought processes cannot reasonably create value for students if it does not lead to a change in classroom practice.

At the same time, tasks to teachers are also perhaps the most challenging and difficult part of designed action sampling. It cannot be emphasised enough how sensitive and difficult it is to advise others on how to do their job better. At the first sight of a person who thinks they know what teachers need to do differently, many teachers become wary. This is natural, given the number of times teachers have to endure unsolicited advice with little relevance to their daily lives. Teachers have many opportunities to practise listening politely and perhaps even dutifully acknowledging important visitors, often with fancy titles, when they speak in the school auditorium. Then teachers go back to their classrooms and ignore the advice given, often deliberately and with good reason. Teacher Roberth Nordin (2017, pp. 19, 27) jokingly describes a typical professional development day:

“The speaker offers a great solution to a problem you don’t have. You get something that you never asked for or ever needed. You usually end up with at least a couple of newly invented tasks to squeeze into your schedule. […] The advice is endlessly varied, but the message is the same: here you get more to do, it’s good if you do it with a smile on your face. And if it doesn’t work right away, try new approaches.”

To understand the delicate nature of the situation, it is worth drawing a parallel with other situations in life when people receive advice they have not asked for, see Figure 6.1. Consider, for example, parents receiving advice on how to look after their child from in-laws, from other parents, or perhaps even from people who do not have children themselves. The guest lecturer in education who has never worked as a teacher is in much the same position.

Figure 6.1 Three cartoons by teacher Erik Johansson, illustrating how badly people dislike unsolicited advice.

One solution to the problem of unsolicited advice is to be inclusive and rigorous in the process of deciding which value-creating tasks should be tested by all teachers. Even when experts possess respectable and undisputed insight, it is perfectly reasonable to allow those teachers who will later test a set of expert advice to be involved in some way in formulating, selecting, or at least having a say in which actions to test.

You can also allow teachers to formulate their own tasks, which are then tested by everyone in the department or school. In any educational organisation, the collective pedagogical expertise is both broad and deep. There are probably also many teachers around Sweden who need to let go of the Law of Jante (don’t think you’re so smart and special) and take on the role of peer learning leader – to “lead colleagues’ professional learning and development [to] create good development, learning and teaching in all classrooms – not just their own” (Rönnerman et al., 2018, pp. 25, 28). Designed action sampling has been shown to contribute to more people taking that step, see example 3 below.

Another solution to the problem of unsolicited advice is to be careful to constantly emphasise that every assignment is just a hypothesis. According to pragmatism, no one can know whether an activity will work well for a particular teacher in a particular classroom. Each action-oriented task test that each teacher undertakes is therefore its own little experiment that can have both varied and unexpected outcomes. Calling a task a hypothesis is also a good way for an expert or fellow teacher to show humility towards the complexity of other people’s reality. Despite the expertise or long experience on which the task may be based, you can never be sure how well it will work for others.

A third solution is to see the journey of testing task hypotheses as a collective and long team journey. This does not involve deduction – an expert, like a parent-in-law, telling everyone what “must work”. Nor is it a question of induction – that a teacher “knows” what works for other teachers. Rather, it is abduction – teachers, school leaders and experts working together and persistently moving between theory and practice. With a good deal of creativity and humble intuition, they try to find out together what could help many teachers and students in their own school or organisation.

No matter how you choose to approach the problem of unsolicited advice, it is a problem that educators need to address. It is not compatible with either educational laws or professional ethics for teachers to reject ideas they themselves do not immediately like. So when Nordin (2017b, p. 97) suggests that teachers should “be left alone without politicians, pundits and other less knowledgeable people telling them how the noble art of teaching should be applied”, this is neither realistic, reasonable nor legal. Instead, every educational institution needs processes in which many teachers, even sometimes reluctantly, continuously and systematically try out new ideas in their own teaching. These can be ideas from colleagues, from experts and from others.

Task hypotheses are thus probably an indispensable part of everyday life in every educational institution with ambitions to create as much value for students as possible. The systematic development work needs to focus on jointly designing or selecting a reasonably large set of value-creating tasks each term, which all the teachers concerned try out in their classrooms or lecture halls and then document and analyse the effects.

Example 3: Empowering employees in pre-schools

A training programme for 190 staff at 11 municipal preschools used designed action sampling as a method. Staff were invited to five lectures in 2018 on tools for better co-operation, communication, leadership and conflict management. At each meeting, fifteen minutes were given for individual written reflection immediately after the lecture. Between each session, staff were given action-oriented tasks to carry out in the workplace and then reflect on. The tasks could involve giving a colleague developmental feedback, helping a colleague out of their victim mentality or trying to replace colleagues’ disappointment with others with clear agreements on change. Tags captured effects such as “Good tool”, “Good co-operation” and “Motivated others”.

Many were unused to reflecting in silence and wondered what kind of text they were expected to write. Such doubts disappeared over time. Towards the end of the exercise, participants realised that they were writing for their own personal development, not for the ‘right’ answer. They learnt to reflect on their own development in a way they had not been able to do before. The tags provided much appreciated guidance for reflection.

In preschool, many are used to working together in groups. Here, however, it became more individual-centred. Each participant was allowed to reflect on their own thoughts and actions instead of being coloured by those who are usually the most vocal. The basic idea was that if everyone takes a small step forward in their own development, we will get further than if a few people take big steps forward. A stronger focus on the self provided a clearer individual responsibility to move forward in one’s own development.

The assignments were a successful element of the programme and consolidated the new knowledge among the participants. Getting feedback on their reflections from the project manager boosted their self-esteem and gave them the courage to talk more at the next meeting. Over time, the initiative led to more people stepping forward and applying for positions as unit developers. More people became more confident in their leadership in everyday work with children and carers. Many were strengthened in their conviction that they can make a difference and made more suggestions for improvements to the organisation.

Actions – teachers do action research on and together with each other

Designed action sampling is essentially an action research method, as it involves collaboration between researchers and practitioners. Action research takes practice as its point of departure and aims primarily to improve the everyday life of professionals through various actions that are staged in practice.[1] Action research in schools is a major movement both in Sweden and internationally.[2] Opportunities include being able to conduct research that is more relevant to schools, being able to better bridge the gap between schools and universities, and being able to combine a need to build the knowledge base of the profession with a need to develop one’s own organisation and staff. Action research is a common strategy for working both with professional collegial learning and with a scientific basis in education. Olsson (2018, pp. 72-73) describes in a concrete way what this is often about in practical terms:

“Professionals formulate questions about their practice, stage an action, follow the process systematically and reflect on what happens. The process ends with some form of documentation. The knowledge of their own practice becomes a basis for further development and improvement work. Action research is conducted in a collective context, for example by starting with the team, which meets regularly and discusses the ongoing improvement work.”

However, action research is very difficult. The practical and scientific challenges are significant. Among researchers more generally, action research is therefore a contested and marginalised form of scholarship, both in education and in other sectors of society. A common criticism is that action research is rarely generalisable, publishable, rigorous or even relevant to a wider audience.[3] The methods often applied in action research are further criticised for being too subjective and with a weak focus on validity, reproducibility, the possibility of external criticism and links to previous research.

Action research is also criticised by practitioners. With so many options for how the research can be conducted, there is a great risk of the process being perceived as vague, anecdotal, fuzzy and ineffective. Collaboration between practitioners can also be perceived as difficult and forced. Being open and honest in the many and time-consuming oral reflection meetings is not natural for all participants.[4]

Designed action sampling can be seen as an attempt to address many of these challenges in a new way and as an attempt to make action research simpler and more powerful. Table 6.1 summarises ten classic features of action research, and how they are handled somewhat differently in designed action sampling. Through a clarified, more focused, more written, more individualised and more theory-oriented process, many of the challenges of action research can hopefully be better and more easily managed. Time and cost are also reduced when schools are not as dependent on an external researcher from a university. Teachers and educational managers can to a greater extent take on the role of research leader themselves. Time is also saved when much of the learning dialogue takes place in writing rather than orally. It is also easier to manage research in terms of scheduling when there is less need to bring together researchers, school leaders and teachers in many long oral discussions.

However, the key difference is that a mainly written and individual (but still collective) scholarship becomes more rigorously documented and thus more generalisable, more theoretically relevant, more reviewable and ultimately more publishable. Whether this benefit comes at the expense of the participants’ perceived relevance and personal development remains to be seen. However, the projects implemented so far do not suggest that this would be the case.

Table 6.1 Comparison between action research and designed action sampling

| Classic features of action research | Comparison with designed action sampling | How designed action sampling develops action research |
| --- | --- | --- |
| Collaboration between researchers and practitioners | Researchers can participate, but practitioners can also do science on their own. | Clarified process removes requirement for researchers to be involved in the work, lowering costs |
| Actions aimed at changing practice | Actions aimed at creating value for learners | Narrowing the purpose clarifies that the focus is on student outcomes. |
| Based on practice and its problems | The starting point can be either theory, practice or design principles. | The focus shifts slightly towards theory, with a better balance between practice and theory. |
| Oral learning dialogue between practitioners in focus groups | Written, structured and confidential learning dialogue, with written confidential feedback. | Dialogue documented in writing and more confidential, research is more rigorous, time is saved |
| The aim is to improve practice rather than produce new knowledge. | Design principles provide better balance between practice and knowledge | New way of describing, disseminating and validating insights increases chance of generalisable new knowledge |
| There are many different ways to conduct action research. | Clear methodological choices, work processes and techniques for data collection and analysis. | Easier and less fuzzy for practitioners to participate, higher chance to make theoretical contributions. |
| Focus on problem solving and dialogue between researchers and practitioners | Focus on experiments and subsequent structured documentation and analysis. | Better able to meet critics’ high standards of scientific rigour and scrutiny |
| Democratic process where everyone is involved in formulating actions | The democratic aspect is mainly that everyone can document and analyse outcomes. | The requirement for consensus and dialogue has been slightly reduced, in favour of more rigorous data collection. |
| A wide range of different data collection methods | All data is collected via deep reflection forms after the action is completed | Great time saving yet improved analysis capacity through mixed structured data |
| Reflection takes place orally in a group, some time after the action has been completed. | Reflection is done individually, in writing and as soon as possible after the action has been completed. | More reflections are deeper and in the moment, and are not as tainted by other participants’ experiences. |

Experiments – we test in the classroom how it works

The scientific experiment is perhaps the most important scientific method of all time. Since Francis Bacon showed in the 17th century that observation is not enough, but that we must also manipulate our world to reveal its secrets,[5] the history books have been filled with famous experiments. Galileo, with a little help from the Tower of Pisa, demonstrated that a stone and a feather fall at exactly the same speed if wind resistance is ignored. Pavlov (1927) proved the phenomenon of the conditioned reflex by ringing a bell just before each dog meal, causing the dogs to salivate even when no food was served. Milgram (1963) proved that we humans are capable of injuring or even killing another human out of sheer obedience, by having volunteer participants administer what they thought were potentially lethal electric shocks to people screaming in pain, hidden behind a wall. However, no people were harmed in the experiment, as the screams came from hired actors pretending to be in pain. Mischel et al. (2011) proved that life is slightly better for those who have the willpower to wait for rewards. In the famous ‘marshmallow experiment’, they gave candy to young children, noted which ones were able to wait to eat the candy and then followed them in life for thirty years.

Experiments are also common in medical research. A common experiment is the randomised controlled trial. Participants are randomly divided into two different groups – one receiving active medicine and one receiving sugar pills. Various effects of interest are then monitored in the study, which is preferably ‘double-blind’ – that is, neither the doctor nor the patient knows whether the medicine or the sugar pill is being administered. Such a study is often called a ‘gold standard’ study, which suggests that many people think it is the best kind of experiment you can do.
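The random division into two groups can be sketched in a few lines of Python. This is only a minimal illustration under our own assumptions: the function name `randomise`, the participant codes and the fixed seed are invented for the example, and a real trial would follow a pre-registered allocation procedure.

```python
import random


def randomise(participants, seed=0):
    """Randomly split participants into a treatment group and a control group."""
    rng = random.Random(seed)      # fixed seed (an assumption) makes the allocation reproducible
    shuffled = list(participants)  # copy, so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


# Hypothetical participant codes; anonymous codes rather than names help
# keep both doctor and patient "blind" to who receives what.
participants = [f"P{i:02d}" for i in range(1, 21)]
treatment, control = randomise(participants)
```

Because chance alone decides who ends up in which group, other influencing factors should on average be spread evenly between the groups, which is what allows the effect of the medicine to be isolated.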

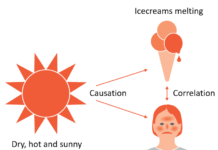

It is important in an experiment to be able to vary preferably only a single variable (called the ‘independent variable’) while keeping many other variables constant (called ‘control variables’), see Figure 6.2. It is only when such control over surrounding influencing factors is achieved that causal relationships between cause and effect can be studied with high reliability in an experiment.

Figure 6.2 Experimental method illustrated with a simple example from biology.

In education research, there are strong differences of opinion on whether it is possible or appropriate to use experiments as a scientific method.[6] Proponents of experimentation believe that if we can just design the perfect randomised controlled trial, we can finally begin to build an increasingly strong body of evidence about what works in education.[7] Critics instead argue that it is not possible to control the myriad of influencing variables involved in an ordinary and highly complex educational situation.[8] Social science, unlike natural science, also deals with meaning-making individuals who are constantly learning and thus constantly changing their behaviour.[9] What educational research tries to study – meaningful learning – is thus also what makes such research methodologically very difficult.

Within critical realism, the idea of randomised controlled trials in the social sciences is completely rejected. Sayer (2010, pp. 3, 116) writes:

“Social scientists are invariably confronted with situations in which many things are going on at once and they lack the possibility, open to many natural scientists, of isolating out particular processes in experiments. […] it is often said that progress is inhibited in social science by […] the impossibility of experiments.”

However, not all critical realists reject experimentation as a method. What is needed is a different approach from the traditional experiment, in which variables are isolated in laboratory-like environments. Danermark et al. (2002, pp. 103, 105) label what is required a social experiment conducted in a natural environment, preferably via emotionally strong situations and with comparisons of many different outcomes with each other:

“The experimental element lies in the circumstance that the researcher consciously provokes a situation in order to study how people handle it. […] [A good method is] to compare several completely different interaction situations in order to be able to discern the structure all these cases have in common.”

In designed action sampling, task hypotheses are at the heart of the social experiment. The task written on the form that is handed out (see Figure 4.2) is a guide to teachers on what kind of situation or event they are expected to “provoke” in their classroom and then reflect on, based on the effects they saw. The more teachers who participate, the more likely it is that research leaders will be able to discern patterns in the structures and mechanisms found in many teachers’ reflections. The more people who participate in a social experiment, the less the risk that the research leaders’ own hopes for a positive result will unduly influence the analysis and conclusions. Instead, the collected data can be allowed to speak for itself. Students can also contribute with reflections, see example 4 below.

If the social experimentation methodology of designed action sampling proves successful on a broad scale, we may even see a period of many new research advances in educational science. We can learn from the many breakthroughs in the history of science that we should not underestimate the potential of experimental methodology. Perhaps social experiments work better than traditional experiments in the field of education? If so, many teachers will have to take a more prominent role in educational science in the future. This is because working with social experiments in the classroom requires the active participation of students’ own teachers in science. The British researcher Pring (2010, p. 122) states:

“tentative beliefs or conclusions drawn by the teacher become ‘hypotheses’ to be put to the test in the classrooms. Only the teachers can do that.”

Example 4: School-work interaction in secondary schools

Students can also benefit from participating in science. In one municipality, 150 8th graders from three different primary schools were involved in an impact study of a career fair organised by the municipality’s study and career counsellor. One of the secondary school teachers also worked differently with two different classes to identify what difference the new approach made. One class was given the task of reflecting individually on various action-oriented assignments before and after the fair, while another class worked in a more traditional way.

Students were asked to write down their thoughts on their future education and careers, to read up on different interesting careers, to write down their expectations before the fair and to write down their thoughts afterwards. Tags captured common effects such as “Know more about different professions”, “Made a choice”, “Increased interest in working life” and “More motivated to study”.

The teacher who compared two classes felt that the scientific method gave a much better insight into the students’ learning and that the students approached the fair in a different way. Both the students’ and the teacher’s learning was deepened. The dialogue with the students, where everyone could speak and then receive feedback from the teacher, influenced the teaching in connection with the fair. The teacher saw what needed to be followed up and was able to better adapt the teaching to the students’ interests and conditions. Afterwards, the teacher regretted not having used the new method with both her classes.

The method provided a depth of understanding of each student’s learning and individual development that cannot be achieved with, for example, exit tickets – student reflection after a lesson. Designed action sampling with students as participants allows teachers to drill down into the most intricate parts of teaching. When all students are able to verbalise their thoughts about concrete actions, the teacher can see hidden structures and group cultures in new ways.

The effects of different interventions in study and career guidance are a relatively unexplored area. Surveys can measure how satisfied a student is with an intervention, but not how learning takes place and why. Here, designed action sampling can be a promising method. Both teachers and career counsellors can gain access to new insights and more effective ways of working.

Design principles – the start and end of the whole research journey

Design principles is a concept derived from design science, a new and rapidly growing research tradition founded by scientist and Nobel Prize winner Herbert Simon.[10] The starting point for design science was the book The sciences of the artificial (Simon, 1969). Its main argument is that a different kind of science is needed for teachers, engineers, architects, lawyers, doctors and others who create artificial phenomena and situations in their profession. Anyone who acts to move from an existing situation to a more desirable situation is essentially working with design.[11] Simon noted that the traditional sciences, natural and social,[12] aim to analyse, describe and explain existing and naturally occurring phenomena, hence the name sciences of the natural. However, this is not enough when the aim is to recommend, prescribe, solve problems or create completely new phenomena. Therefore, a different scientific basis for such creation processes and a partly different scientific methodology are needed. With its focus on new phenomena and solutions that work in practice, design science is philosophically grounded in pragmatism[13] and abduction.[14] Table 6.2 compares traditional research with design science in more detail.

Design science does not replace traditional research, but is a complement that can strengthen the practical relevance of research by better bridging the gap between theory and practice. Such bridging is done through something called design principles. A design principle consists of four elements that help practitioners design a specific solution for their particular situation.

A well-formulated design principle often comes in the form:[15] In situation A, if you want to achieve B, do C, because D. Four questions should be answered by a design principle:[16] 1) What to do? 2) In which situation? 3) To achieve what effects? 4) Why does it work? The fourth question is not always possible to answer, especially in early phases when there is not yet a deep understanding of why something works.
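For readers who like to think in structures, the four elements can be written down as a small record. The Python sketch below is only an illustration under our own assumptions: the class name `DesignPrinciple`, its field names and the example principle are invented here and are not taken from the design science literature.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DesignPrinciple:
    situation: str                   # A: in which situation?
    desired_effect: str              # B: to achieve what effects?
    action: str                      # C: what to do?
    rationale: Optional[str] = None  # D: why does it work? (may be unknown in early phases)

    def as_sentence(self) -> str:
        """Render the principle in the form 'In A, if you want to achieve B, do C, because D'."""
        text = (f"In {self.situation}, if you want to achieve "
                f"{self.desired_effect}, {self.action}")
        if self.rationale:
            text += f", because {self.rationale}"
        return text + "."


# An invented example; the rationale is left out, as it often is early on.
principle = DesignPrinciple(
    situation="a class where few students speak",
    desired_effect="broader participation",
    action="let students discuss in pairs before answering",
)
print(principle.as_sentence())
```

Making the rationale optional mirrors the point above: a principle can be useful and testable before anyone fully understands why it works.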

As organisations are complex and integrated by nature, design principles are often difficult to apply in isolation.[17] They are therefore usually described and applied in groups, as a coherent set of design principles for a particular overall purpose.

Table 6.2 Differences between traditional research and design science (the table is based on an article by Romme, 2003).

| | Traditional research | Design science |
| --- | --- | --- |
| Object of study | Naturally occurring phenomena | Artificial phenomena created by human beings |
| Objectives | Analyse, describe and explain what exists today. | Creatively change, design and create things that don’t yet exist but should. |
| Result | Patterns, laws, relationships between different forces and variables | Recommendations, design principles, solutions, useful actionable knowledge |
| Form | “In a situation A, if B happens, then C often follows” | “In a situation A, if you want to achieve B, do C” |
| Research ideals | Objective, observational, analytical, unemotional | Pragmatic, action-oriented, situational, committed. |
| Challenges | How to be practically relevant? | How to conduct rigorous research? |
| Method for experiments | Controlled experiments | Pragmatic experiments |
| How the two can be combined | Design principles provide a starting point for dialogue and collaboration between traditional research and design science. The principles are tested in a series of pragmatic experiments and are also related to existing traditional descriptive research. | |

The process of developing design principles can start either with traditional research or from practice. Over time, the design principles are further developed and tested in various pragmatic experiments and become a mixture of perspectives and ideas from both research and practice. Well-developed design principles become a kind of written, codified and clarified knowledge around actions that have proven to work well in practice in certain specific situations. A set of design principles thus does not answer the more classic research question “What is true?”, but rather the more pragmatic question “What works in a particular situation?”.[18]

In designed action sampling, the concept of design principles corresponds to the action-oriented value-creating tasks with associated tags. Each task is a response to what is to be done, and the tags are responses to desired effects. Several tasks and tags can also be combined into a content package, see an example in Figure 6.3, taken from Example 1 in chapter 4. In practical terms, the content package is an understandable and useful form for disseminating, discussing, analysing, further developing and testing a set of design principles beyond one’s own educational institution.

Figure 6.3 Example of a content package for co-operative learning consisting of three tasks and eighteen tags. This package is a shortened version of a content package originally created by Niclas Fohlin and Jennie Wilson, and has been trialled in a number of schools around Sweden. Read more in example 1 in chapter 4.
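To make the idea of a content package more tangible, the sketch below represents a package as a set of tasks and tags and counts how often each tag is ticked per task. It is a minimal illustration under our own assumptions: the task names and the reflections are invented, the three tags are borrowed from Example 3, and the function name `tally` is hypothetical rather than part of any existing tool.

```python
from collections import Counter

# Hypothetical content package: task names are placeholders,
# tags are borrowed from Example 3 in this chapter.
package = {
    "tasks": ["Task 1", "Task 2", "Task 3"],
    "tags": ["Good tool", "Good co-operation", "Motivated others"],
}

# Invented reflections: (participant code, task, tags the participant ticked).
reflections = [
    ("T1", "Task 1", ["Good tool"]),
    ("T2", "Task 1", ["Good tool", "Motivated others"]),
    ("T3", "Task 1", ["Good co-operation"]),
    ("T1", "Task 2", ["Motivated others"]),
]


def tally(reflections):
    """Count, for each task, how many times each tag was ticked."""
    counts = {}
    for _participant, task, tags in reflections:
        counts.setdefault(task, Counter()).update(tags)
    return counts


counts = tally(reflections)
for task in package["tasks"]:
    print(task, dict(counts.get(task, Counter())))
```

A tally of this kind only shows which effects were reported for which task. The written reflections behind each tag are still needed to understand why an effect arose.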

Design principles play a central role in designed action sampling. This is illustrated in Figure 6.4, which builds upon the circular and abductive approach in Figure 5.4. Whether it is researchers or practitioners who start the circular work, writing down design principles in the form of a set of tasks and tags is one of the first steps.[19] Then you go round Figure 6.4, lap by lap, developing the design principles further. Each time you then want to report and disseminate insights, it is primarily the design principles, in the form of a content package, that you disseminate to outsiders and then hopefully receive feedback on. Thus, the work process begins and ends with the design principles.

The design principles also facilitate dialogue between educational researchers and practitioners.[20] The tasks and tags can be easily compared with what is written in traditional educational research literature. They can also be trialled in many different places, so that different outcomes can be compared. This is called a replication study, i.e., a different research team repeats a research study using the same methodology, but in a different location, to see if they get similar results.

Figure 6.4 How design principles bridge the gap between ideal and reality.

Fine-grainedness – theory and practice intertwined in everyday life

The idea of fine-grainedness is basically about time perspectives. With frequent alternations over time between teachers’ own learning of new ideas (theory) and everyday value creation for students (practice), we get a good work-learn balance, see Chapter 3. If no consideration is given to fine-grainedness, teachers’ own learning will instead be spontaneously separated from value creation for students, see Figure 6.5. Then we get collegial discussions, in-service training days and professional development efforts that risk not significantly affecting everyday teaching.[21] We get a poor work-learn balance. As we have previously stated, theory and practice are like oil and water. They separate spontaneously. They must be constantly mixed so as not to be separated from each other, just like oil and water in a vinaigrette.

Figure 6.5 An illustration of poor work-learn balance and the need for a fine-grained mix of theory and practice for teachers.

With some inspiration from geology, fine grain size can be categorised into four basic mixtures, here called boulders, rocks, gravel and sand, see Figure 6.6. For teachers working in a school without effective in-service training, the boulder model applies. The teacher training programme they attended is the only source of theory available to them in their practical work with pupils. Fortunately, there are probably few such schools today. Most schools are probably at the gravel level, where study days are relatively evenly distributed throughout the year, every month or two.

Figure 6.6 Four different models of fine-grainedness of work-learn balance in working life.

Designed action sampling helps educators to reach the fine-grained sand level in a relatively simple way and thus achieve a better work-learn balance in everyday life. The theories and development ideas that the organisation believes in and wants to try out are broken down into many small parts by having a content package with a few different tasks (say 3-8) worked through by all relevant staff over a few months. Every week or two, teachers spend some time familiarising themselves with the background, carrying out a task and finally reflecting in depth on the outcome. A task form is thus completed every week or fortnight by each teacher and submitted to the peer learning leaders, who then provide feedback. After a few months, all the work is summarised and discussed and analysed in an analysis meeting.

Let’s illustrate this with an analogy from the world of chemistry. A surfactant is a surface-active chemical substance that allows liquids to mix that would otherwise not mix spontaneously. The surfactant lowers the surface tension between the two liquids. Surfactants are found, for example, in detergents and dissolve oil-based dirt in the wash water. The designed action sampling form (see Figure 4.2) can be compared to a surfactant, but for the two ‘liquids’ of theory and practice. A single A4 page on the teacher’s desk helps reduce the surface tension between theory and practice. The form links the teacher’s own learning (theory) with the creation of value for students (practice).

If the teachers had not received a form on their desks, many of them would probably have been so immersed in a hectic daily routine that they would not have thought about the development ideas that were on the agenda at the last development meeting until the next professional development day. In fact, in the educational development projects we have run on designed action sampling, many teachers have asked for a simple way to be reminded every two weeks of the small development steps they can, and really want to, take in their everyday lives. The form is such a reminder. A little nudge that makes it a little easier for teachers to actively choose to mix theory and practice in their daily lives. Nudging is about gently changing people’s behaviours and habits so that they are more in line with the attitudes they already have. The idea comes from behavioural economics[22], and one of its originators, Richard Thaler, was awarded the Nobel Memorial Prize in Economic Sciences in 2017.

Based on the idea of nudging, development work needs to be simplified and linked to concrete actions. Important information needs to be presented in a simple way, and too much information should be avoided. Finesse in development work therefore requires an exercise we can call elephant carpaccio.[23] A large elephant, such as a whole area of research or a whole book the teachers want to work with, is sliced into many thin slices that can then be savoured one by one, every week or every two weeks. Educational managers may continue to distribute well-chosen literature to all teachers, but each book needs to be accompanied by content packages with task forms to help teachers balance the theoretical material on a practical level.

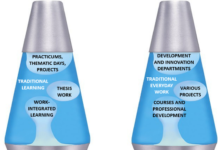

The idea of fine-grainedness is also applicable to students, see Figure 6.7. With a more fine-grained mix of theory and practice, they also get a better work-learn balance. An example of the boulder model is the degree project that many students have to do during the last four months of their programme. While thesis projects are often very instructive for students, they also illustrate how difficult it is for students to mix theory and practice in their daily lives. An example of the rock model is the two-week compulsory internship at a workplace organised for 15-year-old students in Sweden. Again, it is of course good that students get to be at a real workplace, but it is a pity that the experience is so rarely integrated in a fine-grained way into their regular education. The gravel model is exemplified by thematic days, insofar as students can then apply their knowledge in the real world outside the school area or campus. There are few good examples of the sand model beyond the rare examples of value creation pedagogy discussed in Part 1.

Designed action sampling can provide students with a more fine-grained experience by giving them action-oriented tasks where they are expected to apply different theoretical ideas in practice to create value for others. The same task form can then be used, in much the same way as for teachers. A good example of elephant carpaccio for students is vocational education, see example 9. Students’ practical activities at the workplace are broken down to a very fine level of detail. A question often used to slice the elephant is: [24]

“What do students need to do in order to learn what they need to know?”

Figure 6.7 Four different models of fine-grainedness giving an increasingly better work-learn balance for students.

Protocol – follow up on what is done and by whom

Collegial learning is an important and mandatory part of teachers’ responsibilities and professional ethics.[25] But teachers’ own learning is no less difficult than other people’s learning. For most people, personal development at work is a steep and emotional uphill struggle. Confronting your own preconceptions and beliefs can be a challenge.[26] Risking being criticised for your own practice can be uncomfortable.[27] Having to apply someone else’s pedagogical ideas can be upsetting and may even feel like an undue infringement of teachers’ pedagogical and professional freedom.[28] Many times, therefore, teachers simply choose to do nothing with the various development ideas that are served up to them based on decisions made in more or less democratic processes. Perhaps in spite of an inner awareness that it is probably against what’s deemed “good” or “right” to be unable or unwilling to develop oneself. Perhaps because there is a lack of confidence in the particular idea being tested.

That we humans are imperfect and do not always choose the ‘good’ path is widely recognised. Ask any priest and you’ll get an account of the history of original sin and modern interpretations of things like ambivalence, weakness of will, laziness and selfishness.[29] Teachers are probably no more or less imperfect than people in other professions. After all, we are all human. But for educational development, it is not only a pity that some choose not to take collective responsibility and participate fully in development work, it also has practical consequences that need to be managed. Educational developer Anders Härdevik (2008, p. 121) writes somewhat pointedly:

“Teachers’ view of democracy is actually synonymous with anarchy; I do as I please! […] This condition means that no significant decisions are implemented in practice. We change the label but keep the content.”

Therefore, methods to ensure teacher accountability are an important part of any educational development programme.[30] One way to deal with the desire for freedom and imperfection that probably exists in most organisations is assessment for teacher learning. Indeed, teacher learning also needs a focus on both formative and summative assessment. Teachers, like their students, need formative feedback on their own learning in order to develop further. Teachers, like their students, also need a friendly but firm summative assessment pressure to get things done, specifically the activities required for educational development to be effective. Otherwise, the hectic pressure of everyday life, and perhaps even laziness or selfishness, will too often win out over the fundamentally morally “good” and collective development issues. Then again, everyday routine work is also to be regarded as “good”. Everyday routine work and development work are two good things that always compete with each other in terms of resources, not only in education.[31]

One difference between teachers’ and students’ learning is that the uncertainty of what learning is likely to take place is usually greater for teachers. Therefore, a slightly different assessment strategy is needed. In designed action sampling, the main focus is whether or not teachers have carried out the agreed developmental action-oriented tasks. The question that the research leader asks a colleague is therefore not “Have you learnt the theory?” or “Have you achieved the intended effects?”, but instead “Have you tried the theory/idea in practice in your own teaching?” and “Have you reflected in writing on your experiences and lessons learnt afterwards?”. Thus, only two simple yes/no questions are required in the summative assessment.[32]

Thus, as a research leader, you can expect all participating teachers to try out the value-creating tasks in their classroom, but you cannot expect it to go well. You can also expect all teachers to reflect after completing the assignment, but you cannot expect all reflections to be “good”. There is no formula for what constitutes a good reflection. However, it is often easy to see what is a bad reflection. There are also many good tips on in-depth reflection. However, research leaders should not judge, evaluate or rate the quality of teachers’ reflections, even if such judgements are often made towards students. The purpose of testing hypotheses and reflecting is to be able to work scientifically together and to collect data that can be analysed in a structured way afterwards. The aim is not to examine teachers in the field of educational development. Rather, the summative assessment is about ensuring that everyone participates in the work in a responsible way and being able to support, and by all means forgive, those who are not really able or willing to participate. However, research leaders can ask questions in their feedback that encourage deeper reflection.

Different types of protocols are a common method of ensuring accountability in educational development.[33] A simple protocol for the summative assessment of designed action sampling is shown in Figure 6.8. Each time the research leader receives a completed form from one of the participants, the two yes/no questions on testing and reflection are considered to have been answered. A small check mark is then placed in the appropriate box in the protocol. Each teacher either has or has not yet completed and reflected upon each task that is part of a content package. Based on the protocol, the research leader can easily follow up with those who have fallen behind and may need extra support or adjustments in their work. The formative assessment takes place separately, via the form in Figure 4.2, and also orally if there is time.

Figure 6.8 Protocol for follow-up in designed action sampling.
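For those who keep the protocol digitally rather than on paper, the follow-up logic can be sketched in a few lines of Python. This is only an illustration under our own assumptions: the class name `Protocol`, its methods and the teacher and task names are hypothetical and are not part of the method as such. A received form counts as "yes" on both summative questions (tried in practice, reflected in writing), and the quality of the reflection is deliberately not recorded.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Protocol:
    """Follow-up protocol: one row per teacher, one column per task."""

    teachers: list[str]
    tasks: list[str]
    # (teacher, task) pairs for which a completed form has been received.
    received: set[tuple[str, str]] = field(default_factory=set)

    def check(self, teacher: str, task: str) -> None:
        """Place a check mark: the task was tried and reflected on in writing."""
        if teacher not in self.teachers or task not in self.tasks:
            raise ValueError(f"unknown teacher or task: {teacher!r}, {task!r}")
        self.received.add((teacher, task))

    def outstanding(self) -> dict[str, list[str]]:
        """Map each teacher who has fallen behind to the tasks still missing."""
        missing: dict[str, list[str]] = {}
        for teacher in self.teachers:
            todo = [t for t in self.tasks if (teacher, t) not in self.received]
            if todo:
                missing[teacher] = todo
        return missing


# Hypothetical content package with two tasks and three participants.
protocol = Protocol(
    teachers=["Teacher A", "Teacher B", "Teacher C"],
    tasks=["Task 1", "Task 2"],
)
protocol.check("Teacher A", "Task 1")
protocol.check("Teacher A", "Task 2")
protocol.check("Teacher B", "Task 1")

print(protocol.outstanding())
# {'Teacher B': ['Task 2'], 'Teacher C': ['Task 1', 'Task 2']}
```

The list returned by `outstanding()` is meant as a starting point for a supportive conversation, not as a judgement of the teachers concerned.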

The protocol for designed action sampling is an important part of the pedagogical leadership of educational managers and other peer learning leaders in development issues. What should actually happen if teachers for various reasons choose not to participate in the organisation’s collegial learning and educational development? There may be perfectly legitimate reasons, such as the teacher being overloaded, having personal challenges in terms of development work, or having a difficult personal life. Just like for students’ learning. Sometimes a little forgiveness may also be needed for those who are not always able to choose the “good” path, just as priests need to be able to meet us fellow human beings in our imperfection and offer forgiveness.

The method of working with protocols, yes/no questions and written reflections also leaves an opportunity for sceptical teachers to submit dissenting opinions in the development work. If you do not believe in a particular development idea, it is quite possible to write a reflection on how badly the idea worked in your own classroom and why you think it turned out that way. When all the participating teachers’ reflections have been received and compiled by the research leaders, it becomes clear whether a sceptic is in good company or not. The structured methodology of designed action sampling is the sceptical teachers’ main guarantee that bad ideas do not become long-lasting in their own organisation. A kind of democracy of ideas with teachers as “voters”. Few ideas will work for everyone, but some ideas can certainly work for many.

What is not acceptable in designed action sampling, however, is to dismiss a pedagogical idea without giving the idea a practical chance in one’s own classroom or lecture hall at least once. That all ideas are given the chance to make a difference for students in all participants’ teaching is ensured through the protocol and through the leadership of the peer learning leader and educational manager.

The need for protocols to monitor and ensure teachers’ participation became evident in the very first school development project where designed action sampling was applied, see example 5 below.

Example 5: Value creation pedagogy in a whole municipality

In 2013, the local government of one of Sweden’s larger municipalities launched an initiative to bring schools closer to the wider community. In this way, students’ motivation to study, creativity and self-confidence would be strengthened. More students would be encouraged to succeed at school and become involved in the development of society.

A dedicated project group was set up in 2014 and given the task of building on the recent inclusion of entrepreneurship in the curriculum. Collaboration was to take place between the school and the outside world, especially other municipal organisations. Municipal employees were also supposed to become more entrepreneurial. The initiative was based on the municipality’s work on study and career guidance and on a conviction that something new needed to be tried in order to boost the school.

Value creation pedagogy became an important starting point in the work, as a broader view of entrepreneurship than just starting a business was requested in schools. It was also a concrete way for schools to interact with the rest of society.

A pilot programme on value creation pedagogy was carried out in the 2015-2016 academic year with sixty volunteer teachers from the municipality’s primary and secondary schools. The participants met three times during the academic year and were given action-oriented tasks to complete in between meet-ups. The assignments involved familiarising themselves with theory and literature, planning for value-creating elements in teaching and testing the approach in practice with their students. Tags captured effects such as “Read literature”, “Discussed with colleague” and “Worked actively with students”.

The final session was emotionally powerful with both tears and laughter. The teachers’ students had been invited and spoke passionately about their experiences of learning from interacting with the outside world. Subsequently, the project was extended by three years and expanded to cover an entire school district, involving around 100 teachers and school leaders from three primary schools, who made around 1,000 reflections on completed assignments. Here, too, the assignments involved familiarising themselves with theory and literature, discussing with colleagues and carrying out practical tests with students. The practical tasks had been refined and made more concrete. Teachers would talk about value creation in the classroom, ask students to think about the question “For whom can this knowledge be valuable today?” and have students seek out concrete recipients of value outside the classroom or school.

The work resulted in many good examples of students interacting with the surrounding community. Teachers reported strong positive effects on students’ knowledge, skills, motivation to study and values. Teachers’ reflections revealed that some had difficulties in understanding and applying the ideas.

The project ended in 2019 without being extended or entering the management phase. This was mainly due to changing political priorities regarding entrepreneurship in schools, nationally and regionally. Key people involved in the project had also left the municipality. However, many schools around Sweden have been inspired through stories shared on social media and through teachers’ inspirational lectures, and have built on the experience. The work has also been documented in research articles. One of the participating schools in the municipality has continued the work on its own initiative.

For the very first time, the project tested designed action sampling as a method. However, this was not a conscious choice. The 29 elements of the method emerged as one led to another. Only in retrospect did the project team and researchers realise the power of such a scientific approach. Impacts and challenges became more visible and could be analysed in more depth when the collected data was interpreted together with all participating teachers. The project team, school leaders and researchers could see in real time what was and was not done. They were able to analyse patterns, provide support to participants and make decisions about project actions. The analysis showed that value creation pedagogy needs to be tested practically with students for teachers to realise the power of the approach. The challenges were about changing teachers’ ways of working; the opportunities were about better utilising students’ potential.

Many participants did not see the point of written individual reflection or the value of a scientific approach. The project team did not initially recognise this value either. Several assignments were too theoretical and did not involve students in the classroom. Neither the project team nor the researchers knew at this stage how best to design tasks. It was also common that teachers did not carry out the practical tasks. Some did not believe in the idea, but most commonly, teachers in their busy lives forgot about the tasks or felt that it was scary to involve students. Overall, the project was only partially successful, but it built the foundation for everything that followed.

Written – collegial learning takes place mainly in text form

Designed action sampling is very much about written communication. Action-oriented tasks are formulated and explained in text form. They are then disseminated to all participants via a written form. Reflection after the tested task is collected in text form. Subsequent analyses also focus mainly on text. All in all, this means a much greater focus on text-based communication than for other scientific learning approaches in education.

Collegial learning has traditionally been conducted with a strong emphasis on oral communication. Teachers sit in many long discussion meetings where they discuss pedagogical and didactic issues. This enables deep understanding but makes it more difficult to analyse and disseminate insights.

Another approach to science in education is systematic quality work. It is mainly focused on numbers. These numbers are usually collected through surveys and from databases of grades and other statistics. The results are easy to disseminate, but the challenge is that the depth of understanding is often almost completely lacking. Numbers rarely tell us why things are the way they are. [34]

Only those scientific methods that allow quick and easy analysis of data have any prospect of working on a wider scale in a constantly time-pressured educational organisation. Therefore, numerical methods have dominated so far, even though they can rarely contribute to deeper understanding. The fact that designed action sampling is mainly based on written language is therefore a deliberate choice. Low time consumption in data analysis can be combined with good opportunities for in-depth understanding.

Another advantage is that insights can be easily and immediately shared and disseminated if they have already been written down when they were expressed. While oral communication allows for deeper understanding and is a good complement, converting speech to text reliably is time-consuming. This requires so-called transcription, listening to recorded oral communication and writing down what was said, word for word. One hour of oral dialogue usually takes 4-5 hours to transcribe and additional time to codify and analyse. This is necessary work if you want to call what you do scientific.
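To get a feeling for what the ratio of 4-5 hours of transcription per recorded hour means in practice, the arithmetic can be written out as a small Python sketch. The function name `transcription_hours` and the example of six meetings of 1.5 hours each are our own hypothetical choices; time for codifying and analysing comes on top and is not included.

```python
def transcription_hours(
    recorded_hours: float,
    low_ratio: float = 4.0,
    high_ratio: float = 5.0,
) -> tuple[float, float]:
    """Return a (low, high) estimate of the hours needed for transcription."""
    return recorded_hours * low_ratio, recorded_hours * high_ratio


# Hypothetical example: six recorded discussion meetings of 1.5 hours each.
low, high = transcription_hours(6 * 1.5)
print(f"{low:.0f}-{high:.0f} hours of transcription")  # 36-45 hours of transcription
```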

Compared with the options of oral and numerical communication, written language thus has the best combination of depth of understanding, time taken to analyse and disseminability, see Table 6.3. Analysis takes the least time when written language is combined with numbers. For this reason, the designed action sampling form in Figure 4.2 contains both written text and various estimates that can be converted into numbers in the analysis phase to facilitate analysis.

Table 6.3 Comparison between oral, written and numerical communication.

| | Oral communication | Written communication | Communication through numbers |
| --- | --- | --- | --- |
| Depth of understanding | Very large | Large | Small |
| Time spent to produce data | Small | Small | Small |
| Time taken for analysis | Very large | Medium | Medium to small |
| Spreadability | Small | Medium to large | Large |

Different people have different levels of oral, written and numerical communication skills. Some people find it easy to express themselves orally; they often speak up and are often heard in meetings. Others find it easier to express themselves in writing and prefer to sit on their own and work out formulations that are then shared with others. Still others like to do the maths and have a great fondness and aptitude for calculating various insights.

The experience so far of designed action sampling in practice is that a stronger focus on written communication improves analytical capacity and gives more participants the opportunity to be heard. It feels more like dialogue and analysis on equal terms. One challenge, however, is that not everyone finds it equally easy to express themselves in writing. Around 5 per cent of all people have dyslexia, a fact that is important to bear in mind in the primarily written-oriented designed action sampling. At the same time, experience to date suggests that written peer learning is still suitable for far more teachers than the oral equivalent, see example 6 below. It also saves time, mainly in the documentation and analysis phase, but also when you no longer need to generate all insights through oral group discussions.

Although designed action sampling is mainly based on written communication, both oral and numerical communication play important roles. Both when formulating action-oriented tasks and when analysing collected data, oral communication is a good way to let everyone speak and contribute insights and experiences. Also, when written reflections are collected, participants are always required to estimate their emotional state and the perceived effects as well. In the subsequent analysis, this makes it possible to quickly produce numbers on teachers’ emotional experiences and on the effects they perceived. This in turn allows for a powerful combined numerical and textual analysis.
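A combined numerical and textual analysis of this kind can be sketched in Python as follows. The data layout, the 1-5 emotion scale, the function names `summarise` and `quotes` and the reflection texts are all assumptions made for illustration; only the two tag names are borrowed from example 6. The numbers give the overview, and the tags then point to which written reflections are worth reading more closely.

```python
from collections import Counter
from statistics import mean

# Hypothetical reflections: emotion rated on an assumed 1-5 scale.
reflections = [
    {"task": "Task 1", "emotion": 4, "tags": ["Improved communication"],
     "text": "My colleague seemed glad to get feedback."},
    {"task": "Task 1", "emotion": 2, "tags": ["Being listened to"],
     "text": "It felt awkward at first."},
    {"task": "Task 1", "emotion": 5,
     "tags": ["Improved communication", "Being listened to"],
     "text": "We talked more openly afterwards."},
    {"task": "Task 2", "emotion": 3, "tags": [],
     "text": "I did not notice any change."},
]


def summarise(reflections):
    """Per task: number of reflections, mean emotion rating and tag counts."""
    summary = {}
    for task in sorted({r["task"] for r in reflections}):
        rows = [r for r in reflections if r["task"] == task]
        summary[task] = {
            "n": len(rows),
            "mean_emotion": mean(r["emotion"] for r in rows),
            "tags": Counter(tag for r in rows for tag in r["tags"]),
        }
    return summary


def quotes(reflections, tag):
    """Return the reflection texts that carry a given tag, for closer reading."""
    return [r["text"] for r in reflections if tag in r["tags"]]


summary = summarise(reflections)
for task, stats in summary.items():
    print(task, stats["n"], f"{stats['mean_emotion']:.2f}", dict(stats["tags"]))

print(quotes(reflections, "Being listened to"))
```

Quotes selected in this way would of course need to be anonymised before they are shown to a wider group.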

Example 6: Behaviour at the adult education centre

In 2019, a unit for municipal adult education applied designed action sampling in a competence development programme for all 39 employees in the unit. The aim was to strengthen the staff’s ability to provide good service and good treatment to each other and to the course participants. An expert met the staff on four occasions for lectures and workshops. In between, staff were given different tasks, such as giving positive feedback to a colleague, treating a colleague with respect, improving any aspect of their communication and reflecting on different attitudes in the workplace. An initial assignment also captured staff expectations for the first session. Tags captured outcomes such as ‘More people taking more responsibility’, ‘Being listened to’, ‘We help each other succeed’ and ‘Improved communication’. The tags were designed by the school leaders themselves by discussing what effects they wanted to achieve with the intervention.

School leaders felt that they could monitor staff learning and development in a way that had not been possible with previous interventions. The action-oriented tasks resulted in a collective behavioural change in the workplace. This change could be monitored during the intervention and at the individual level through staff members’ confidential reflections to the school leaders. Employees who were otherwise often silent gradually dared to speak up more than usual at the meetings, as they had been affirmed by the school leaders through written feedback on the reflections.

Overall, the intervention had a more profound impact on employees than a lecture series usually does. Everyone was given a voice, not just those who talk a lot. This gave school leaders a more complete picture of the situation in the workplace, which was felt to lead to better decisions, more efficient use of resources and a clearer picture of each employee’s development. It was also easier for school leaders to assess the impact of the intervention on the workplace.

Designed action sampling has become a regular feature of the unit. However, the main responsibility for designing tasks, following employees in their learning and giving them feedback has been taken over by the team leaders.

Confidential – only a few read everyone’s reflections

In designed action sampling, we should avoid sharing all teachers’ reflections openly with everyone, even within a single team. This can lead to many teachers writing what they think is expected by the organisational or group culture rather than how they feel deep down, which leads to poorer data and a lower reliability of the research work. The reason for this is that group dynamics greatly influence what ideas are shared and how they are presented.

Various group dynamic phenomena are a central theme in the work of researchers Argyris and Schön on organisational learning. These include phenomena such as defensive colleagues, concealment of differences and failures, competitive mentality, reluctance to change, rivalry, political games, territorial thinking, groupthink and protection of each other.[35] Argyris and Schön (1978) have proposed a vision for a learning organisation called model two, see Figure 6.9. They compare this vision with a model one organisation, a limited learning organisation. The possibility of achieving the vision of a model two organisation depends to a large extent on how well one succeeds in suppressing and neutralising various naturally occurring harmful group dynamic phenomena. Argyris and Schön succinctly state that virtually all the organisations they studied operate primarily in line with model one.

Figure 6.9 Model Two is a vision for a learning organisation proposed by researchers Chris Argyris and Donald Schön in the 1970s (Argyris, 2002; Argyris & Schön, 1978). When people in an organisation behave according to model two, it becomes possible to take a big step towards becoming a learning organisation.

Confidential sharing of teachers’ reflections in small groups has been shown to help move towards the vision of a model two organisation. If the group that gets to read teachers’ reflections is around one to three people instead of around ten to thirty, it can be expected that many problematic group dynamic phenomena are mitigated or completely absent. Over time, a culture more in line with model two organisations is established.

It is not surprising that intimate dialogues in small groups can provide a breeding ground for a model two learning organisation. The vast majority of people find it easier to share their inner feelings, admit their mistakes and risk revealing their supposed incompetence to one to three people, rather than ten to thirty. Being fully honest, showing vulnerability and trust is simply easier in small intimate groups than in large crowds.

The fact that reflection and confidential dialogue take place in small groups of around three people does not prevent really interesting reflections from being disseminated more widely in the organisation. The important thing is that everyone can trust that such dissemination takes place while maintaining anonymity. Sure, some staff members may try to figure out who wrote something that has been shared with everyone, but the feeling is different when no one can be sure who wrote a particularly revealing, emotional or critical reflection. Putting sensitive issues on paper under confidentiality becomes a way of bridging the gap between confidential small group discussions, which would otherwise be mainly oral, and the need for a whole organisation to be well informed. Only then can everyone participate and learn from each other’s various failures, dilemmas and shortcomings. And, of course, from all successful attempts to create value for students. Many teachers also feel that they have greater access to their managers when they can discuss sensitive issues in written confidentiality, provided there is confidence that trust will not be abused.

In addition to group dynamics, there are also time benefits to not having everyone read everything. It is simply more time efficient for a small group of peer learning leaders to read all the reflections, engage in a confidential dialogue and provide feedback to all, and then compile the most interesting insights in a condensed form for all to see. As all reflections are also available word for word in written form, particularly interesting quotes can be extracted, anonymised and shown to everyone in the final analysis phase. This reduces the risk of important insights being filtered and distorted orally. Numerical statistics from emotion ratings and tags also give all participants an overall sense of the big picture, even if they have not read everyone’s reflections.

[1] For some different Swedish definitions of action research, see Rönnerman (2000, p. 14), Tallvid (2010, p. 66) and Huisman (2006). For some international definitions, see Altrichter et al. (2002) and Coghlan and Shani (2014).

[2] In the Nordic region, the Nordic Network for Action Research (NNAF) has existed since 2004, and since 2010 it has held an annual, well-attended network conference, Noralf.

[3] A Swedish summary of the criticism has been made by Huisman (2006). An international review of challenges has been made by Pring (2010). Several challenges are also listed by Coghlan and Shani (2014) and by Wedekind (1997).

[4] For an illustrative example of the challenges of co-operation, see Waters-Adams (1994).

[5] For a fascinating description of the history of the experiment, see Shadish et al. (2001).

[6] For a Swedish discussion of this issue, see the thesis by Levinsson (2013).

[7] See for example Slavin (2002).

[8] See for example Biesta (2007) and Olson (2004).

[9] For a discussion on the difficulty of studying meaning-making subjects, see Sayer (2010, s. 196).

[10] The roots of design science are traced by Dresch et al. (2015) all the way back to Leonardo Da Vinci’s engineering in the 15th century, but Simon’s book is still considered the starting point.

[11] This is described in an often-quoted phrase by Simon (1969, p. 111): “Everyone designs who devises courses of action aimed at changing existing situations into preferred ones.”

[12] Although social science also often deals with artificially created phenomena, social science research methods are usually derived from the natural sciences, which is a problem in itself. See for example Mirowski (1991).

[13] For a review of pragmatism and design science, see Romme (2003).

[14] For a review of abduction in design science, see Dresch et al. (2015, pp. 61-62).

[15] This is described in more detail by Dresch et al. (2015, p. 111) and by Romme and Endenburg (2006, pp. 288-289).

[16] The steps are described in more detail in a paper by van Burg (2010, p. 15).

[17] This is discussed by Romme and Endenburg (2006, pp. 288-289).

[18] A book by Dresch et al. (2015) describes traditional and design-based research in detail.

[19] The methodological literature in design science contains many detailed process descriptions. For an overview, see Dresch et al. (2015, pp. 67-102). Van Aken and Romme (2009) also describe a five-step model consisting of (1) select a practical problem, (2) search literature, (3) synthesise, (4) create design principles and (5) test and develop them further.

[20] Romme (2003, pp. 567-568) describes in more detail how design principles facilitate dialogue and collaboration between researchers and practitioners.

[21] See for example Härdevik (2008, pp. 87-88, 121) and Åstrand (2018, p. 171).

[22] Read more in Thaler and Sunstein (2009). A classic example of nudging is to paint a fly in the urinal to get men to aim at the right spot.

[23] The idea of the elephant carpaccio came from consultant Alistair Cockburn and was originally about slicing up a user story into very small parts that then become the functionality of an IT system.

[24] This question is taken from the constructive alignment assessment theory by Biggs and Tang (2011); read more in Lackéus and Sävetun (2019b, p. 15).

[25] The Teachers’ Association and the National Union of Teachers (2006, p. 8) write in a publication on teachers’ professional ethics: “Teachers undertake to take responsibility for developing their skills in their professional practice.”

[26] Read more about this in Katz and Ain Dack (2017).

[27] Read more about this in Härdevik (2008).

[28] Jarl et al. (2017, p. 119) write about unsuccessful schools, where a “self is best, alone is strong” structure is common.

[29] For a modern review of original sin, see Grantén (2013). See also de Botton (2013, pp. 92, 117, 142).

[30] See Sjöblom and Jensinger (2020, pp. 163-164) for a review of internal and external accountability based on the Fullan and Quinn (2015) coherence model.

[31] This requires us to have an ability called ambidexterity: being able to balance between everyday routines and development work. See Lackéus, Lundqvist, Williams Middleton and Inden (2020, p. 14).

[32] This assessment method has been shown to work well for action-based learning; read more about this in Lackéus and Williams-Middleton (2018).

[33] Read more about this in Katz and Ain Dack (2017, pp. 100-104).

[34] For some challenges with surveys, see Phellas et al. (2011) and Kelley et al. (2003).

[35] Argyris and Schön (1978, pp. 119-127) write about these and many other group dynamic phenomena and how they affect organisational learning.